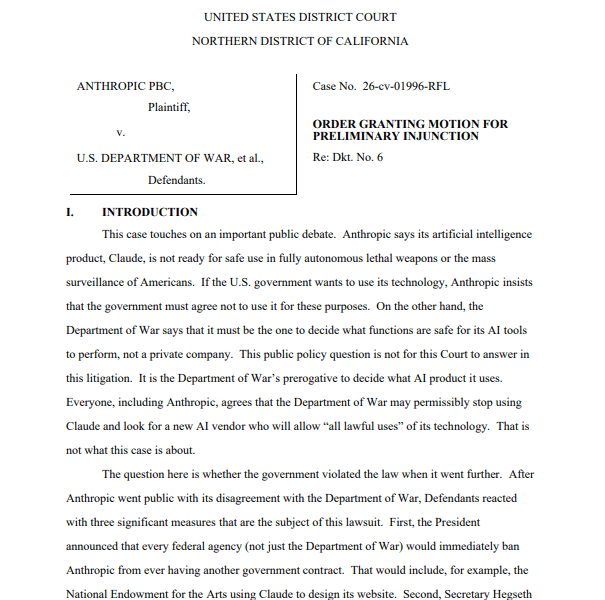

A US federal judge in San Francisco has temporarily blocked the Pentagon’s ban on Anthropic, granting the AI company a preliminary injunction. The order also halts President Donald Trump’s directive instructing federal agencies to stop using Anthropic’s chatbot, Claude, after the company was labeled a national security supply chain risk.

Judge Rita Lin of the Northern District of California criticized the government’s actions as “arbitrary, capricious, [and] an abuse of discretion,” emphasizing that no statute allows branding a US company as a potential adversary for expressing disagreement with federal policy. The ruling followed a lawsuit Anthropic filed in early March, in which the company argued that the Pentagon and the Trump administration had overstepped their authority and retaliated against it for its public statements.

Background: Anthropic’s Military Contract and Restrictions

The dispute originated from a July 2025 contract between Anthropic and the Pentagon that was intended to clear Claude for use on classified networks. Negotiations collapsed in February after the Pentagon sought unrestricted use of the AI for all lawful purposes, including military applications. Anthropic refused, citing ethical limits on the use of its technology for lethal autonomous weapons and mass domestic surveillance.

Implications for AI and Federal Policy

Anthropic, a leading enterprise AI firm with a 32% market share in 2025, argued that the government’s punitive measures constituted illegal First Amendment retaliation. The injunction preserves the company’s ability to operate in federal systems while the case proceeds, marking a significant check on federal authority over AI contractors.
