
Google Warns AI-Powered Hackers Used Zero-Day Attack to Bypass Two-Factor Authentication

Google’s Threat Intelligence Group has reported what it believes is the first known case of hackers using artificial intelligence to develop a zero-day exploit, raising fresh concerns about how cybercriminals are adapting advanced AI tools.


According to Google, threat actors collaborated on plans for a large-scale exploitation campaign targeting a widely used open-source, web-based system administration tool. The exploit reportedly allowed attackers to bypass two-factor authentication (2FA), a security feature commonly used to protect online accounts, including crypto wallets and exchanges.

How the Zero-Day Vulnerability Worked

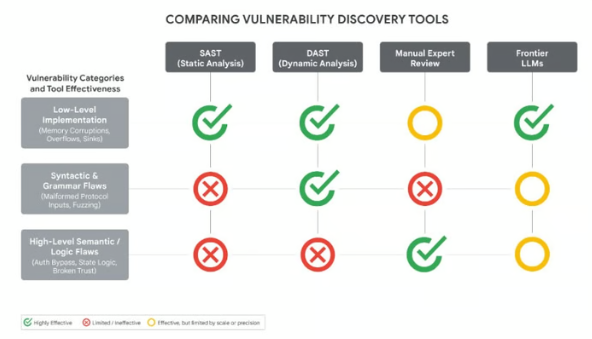

The attack still required valid login credentials, but hackers managed to bypass the second layer of security. Google said the flaw was not caused by a basic coding mistake but instead involved a high-level semantic logic flaw, where developers had built in a trust assumption that attackers could exploit.
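To make the idea of a "trust assumption" concrete, here is a minimal Python sketch of one way such a logic flaw can look. It is purely illustrative: Google has not published the affected code, and every name here (`login_vulnerable`, `login_fixed`, `client_says_trusted`, `TRUSTED_DEVICES`) is hypothetical. The sketch assumes a login flow that skips the second factor for "trusted" devices.

```python
from dataclasses import dataclass

# Hypothetical user record; none of these names come from Google's report.
@dataclass
class User:
    name: str
    password: str   # plaintext only to keep the sketch short; real systems store hashes
    totp_code: str  # stands in for a real one-time code check

USERS = {"alice": User("alice", "s3cret", "123456")}

def login_vulnerable(name, password, otp=None, client_says_trusted=False):
    """Flawed flow: a value supplied by the client decides whether 2FA runs."""
    user = USERS.get(name)
    if user is None or user.password != password:
        return False  # valid credentials are still required
    # Trust assumption: the server believes the caller's own claim.
    if client_says_trusted:
        return True
    return otp == user.totp_code

# Assumed fix: the server keeps its own record of trusted devices.
TRUSTED_DEVICES = {("alice", "device-token-abc")}

def login_fixed(name, password, otp=None, device_token=None):
    """Safer flow: 2FA is skipped only for devices the server itself recorded."""
    user = USERS.get(name)
    if user is None or user.password != password:
        return False
    if (name, device_token) in TRUSTED_DEVICES:
        return True
    return otp == user.totp_code

# An attacker with stolen credentials but no one-time code:
print(login_vulnerable("alice", "s3cret", client_says_trusted=True))
print(login_fixed("alice", "s3cret", device_token="forged"))
```

In the first function every individual line is valid code, yet the design lets the caller opt out of the second factor. That is the sense in which a semantic logic flaw differs from a basic coding mistake.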

Researchers said they have “high confidence” the hackers used an advanced large language model (LLM) to discover and weaponize the vulnerability. Evidence included AI-style formatting and even a hallucinated code element commonly linked to machine learning models.

Growing Use of AI in Cybercrime

Google noted that cybercriminals are increasingly using AI to improve hacking methods, evade detection, and automate attacks. Malware families such as PROMPTFLUX, HONESTCUE, and CANFAIL are already using language models to hide malicious code and avoid cybersecurity defenses.

The company also warned that threat actors are now industrializing access to premium AI systems, using pooled accounts, stolen API keys, and anti-detect tools to scale malicious operations.

As more businesses adopt AI systems, experts warn that connected tools, third-party integrations, and automated features may become key targets for future cyberattacks.




